WARNING: This post describes uses and modifications of the Oculus Quest headset that are not recommended or sanctioned uses of the Quest hardware, software, or technology. Pursuing such uses or modifications may increase the risk of serious injury or property damage. If you try to replicate these or similar actions, you do so at your own risk.

https://www.youtube.com/watch?v=XeCQCxKMkiI

My last update was over 6 years ago and it probably seemed like the project was dead, but I've got a new post today. In my last update I wrote:

"just recently I accepted a job at Oculus (thanks again Palmer), so I'm going to be shutting down this project indefinitely to concentrate on my new job...

...I want to continue this research at some time in the future and my hope is that by surrounding myself full-time with VR technology and experts, I can develop a much better implementation."

So what has happened since then? Well, I'm still at Oculus, having worked in a variety of areas and on loads of great projects, but I've had very little time to continue this locomotion hobby. After the first few Rovr iterations it became clear to me that some sort of dead-reckoning system was the only way to go - most likely either an inertial or a computer vision system. At first I focused on an inertial suit because it felt like the easier solution to implement. I backed the PrioVR Kickstarter in hopes that I could get a ready-made suit, but that project failed, so I tinkered a little with building my own IMU pants but couldn't really find the time or motivation, especially since I felt the system would be compromised by drift and poor accuracy. What I really wanted was a computer vision system, but it was out of my technical comfort zone. I had hoped to join the computer vision group at Oculus to acquire the skill set to work on it, but that move never materialized, so the project remained on ice - or almost. For each headset iteration I would retrofit the previous Rovr. I made a DK1, a DK2, and a CV1 upgrade of the original IMU/GPS system just for fun, but the performance never really improved and nothing interesting came of it.

Then the Santa Cruz project started to come together. This was exactly what I had been waiting for and I didn't have to do anything - just wait for others to solve all the hard problems. I was a little skeptical that the tracking performance would scale, but even early versions far exceeded my expectations, so once Quest started to reach a mature state I finally sat down to implement an improved version of redirected walking. The video above shows the results. Conceptually it's identical to the previous Red Rovr, but the precision and latency are orders of magnitude better. The older version was essentially a gesture system. You had to move with exaggerated intent to start a delayed glide and then align your walking speed with the character in order to achieve a sense of agency and presence. The Quest version just works. You move through the world naturally without conscious effort or compromise, and the longer you go, the more transported you feel. Except for the non-engaging content shown above, this is the purest and most immersive type of VR experience available.

I used a stock Quest headset and 2 decks of playing cards thrown randomly on the ground to provide some visual contrast for tracking. The Quest is not recommended for outdoor use and does not work well there, but I filmed on a fully overcast day to avoid camera saturation and potential damage, and also to allow for some controller tracking. Under these conditions headset tracking works, but controller tracking was only viable for basic operation.

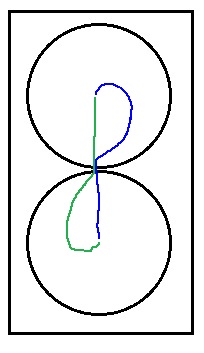

The redirection algorithm is bare-bones and largely unchanged. It simply tracks the clock direction of motion (clockwise or counter-clockwise) relative to the field center and rotates the virtual world around the player in the same direction. There are a variety of tricks to accomplish tighter redirection in specialized scenarios, but I have always been interested in the worst case: continuous exploratory movement through non-customized content. For that scenario this example field size of 40m x 40m is a bit small. I used a 4 deg/sec turning speed here and still hit the boundary more frequently than I would consider acceptable. For me 4 deg/sec is still perceptible, and others who have tried it commented that it made them feel slightly drunk. So I still agree with my findings from earlier versions: 2 deg/sec feels optimal, and a field size from 60m x 60m to 80m x 80m is likely ideal to avoid frequent edge collisions. This all seems technically possible with the Quest, but it does make finding a play space more challenging. Again, this is for the most challenging case - imperceptible redirection during continuous motion. Distracting content or content that encourages slower, more deliberate motion (i.e. indoor worlds) can work in smaller play spaces, and there is a body of research that covers all of these variations and specialized techniques.
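To make the idea concrete, here is a minimal Python sketch of one redirection update, not the code that actually runs on the headset. It assumes a 2D ground-plane position in meters with the field center at the origin, and a counter-clockwise-positive world yaw in degrees. The names `clock_direction` and `redirect_step` and the `eps` threshold are made up for illustration. The clock direction is taken from the sign of the 2D cross product of position and velocity.

```python
from typing import Tuple

Vec2 = Tuple[float, float]


def clock_direction(pos: Vec2, vel: Vec2, eps: float = 1e-6) -> int:
    """Return +1 for counter-clockwise motion around the origin,
    -1 for clockwise, and 0 when it cannot be determined
    (standing still, or moving straight toward/away from the center)."""
    cross = pos[0] * vel[1] - pos[1] * vel[0]
    if abs(cross) < eps:  # eps is an assumed dead-band, not from the post
        return 0
    return 1 if cross > 0 else -1


def redirect_step(world_yaw_deg: float, pos: Vec2, prev_pos: Vec2,
                  dt: float, rate_deg_per_sec: float = 4.0) -> float:
    """Advance the world yaw by one frame of constant-rate redirection.

    The world is rotated around the player in the same clock direction
    as the player's motion around the field center.
    """
    vel = ((pos[0] - prev_pos[0]) / dt, (pos[1] - prev_pos[1]) / dt)
    return world_yaw_deg + clock_direction(pos, vel) * rate_deg_per_sec * dt


if __name__ == "__main__":
    # Player 10 m east of center, walking north: counter-clockwise motion.
    yaw = redirect_step(0.0, pos=(10.0, 0.0), prev_pos=(10.0, -0.1), dt=0.1)
    print(yaw)
```

In this sketch the world only rotates while the player is moving, because a stationary player has no clock direction. Whether the real implementation behaves that way is an assumption.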

https://illusioneering.cs.umn.edu/paper ... ga2018.pdf

But I would still not suggest that basketball courts are sufficient (much less a large room) for a great experience.
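As a rough sanity check on these field sizes (my own back-of-the-envelope sketch, not a derivation from the post): if you walk a virtually straight line at speed v while the world turns at a constant rate ω, your real path is an arc of radius v/ω. The 1.4 m/s walking speed below is an assumed typical pace, and `redirect_circle_diameter` is a hypothetical helper name.

```python
import math


def redirect_circle_diameter(walk_speed_m_s: float,
                             turn_rate_deg_s: float) -> float:
    """Diameter in meters of the real-world circle traced while walking
    a virtually straight line under constant-rate redirection."""
    omega = math.radians(turn_rate_deg_s)  # turn rate in rad/s
    return 2.0 * walk_speed_m_s / omega


if __name__ == "__main__":
    walk_speed = 1.4  # m/s, assumed typical walking pace
    for rate in (4.0, 2.0):
        d = redirect_circle_diameter(walk_speed, rate)
        print(f"{rate} deg/sec -> circle diameter of about {d:.0f} m")
```

Under that assumption, 4 deg/sec gives a circle roughly 40 m across and 2 deg/sec gives one roughly 80 m across. This lines up with the 40m x 40m field feeling tight at 4 deg/sec, and with the larger field suggested for 2 deg/sec. A FIBA basketball court is 28 m x 15 m, well short of either.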

One big improvement is the new boundary enforcement - a custom system implemented in-app after disabling the Oculus Guardian. My previous boundary enforcement attempted to quickly spin the world to bring you back into the play-field, but that had the disadvantage of not giving environmental context and also producing discomfort. The new one works like other guardian systems in that it displays your real-world boundaries once you get close to the edge (the red zone). But within the red zone it also face-locks the virtual world to your head direction. That allows you to quickly turn the world to align better with your real boundary and start moving again in an optimal direction. The optimal direction is rarely towards the center of the field but instead lies along a shallow angle entering back into the play-field. This tangential path will tend to send you along a circle around the field center. To avoid discomfort and dizziness associated with face-locking, a full boundary floor is rendered during turn correction to give you a stable ground reference.
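The face-locking behavior can be sketched in a few lines of Python. This is an illustrative guess at the logic rather than the in-app implementation. It assumes a square field centered on the origin, a hypothetical 2 m red-zone margin, and yaw angles in degrees, with the virtual view direction defined as head yaw minus world yaw. All names here (`BoundaryState`, `in_red_zone`, `update_world_yaw`) are made up for the example. Rendering of the boundary and floor is left out.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


def wrap_deg(angle: float) -> float:
    """Wrap an angle in degrees to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


@dataclass
class BoundaryState:
    world_yaw_deg: float = 0.0
    last_head_yaw_deg: Optional[float] = None


def in_red_zone(pos: Tuple[float, float], half_size_m: float = 20.0,
                margin_m: float = 2.0) -> bool:
    """True when the player is within margin_m of the edge of the square
    field (margin_m is an assumed value, not taken from the post)."""
    return max(abs(pos[0]), abs(pos[1])) > half_size_m - margin_m


def update_world_yaw(state: BoundaryState, pos: Tuple[float, float],
                     head_yaw_deg: float) -> float:
    """Inside the red zone, add each frame's head-yaw change to the world
    yaw so the virtual view direction stays fixed while the player turns."""
    if in_red_zone(pos) and state.last_head_yaw_deg is not None:
        state.world_yaw_deg += wrap_deg(head_yaw_deg - state.last_head_yaw_deg)
    state.last_head_yaw_deg = head_yaw_deg
    return state.world_yaw_deg


if __name__ == "__main__":
    s = BoundaryState()
    update_world_yaw(s, (0.0, 0.0), 30.0)          # mid-field: no face-lock
    yaw = update_world_yaw(s, (19.0, 0.0), 50.0)   # red zone: world follows head
    print(yaw, wrap_deg(50.0 - yaw))               # view direction unchanged
```

Because the world turns with your head inside the red zone, you can physically rotate toward a shallow re-entry angle without the virtual scene appearing to turn. That is the property the sketch is meant to show.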

All in all I am satisfied with this system and it's pretty much what I had envisioned long ago when I started this thread. I'm closing this project now since there seems little reason to pursue an external redirection system when it's so easily achieved now with a couple hundred lines of code onboard an integrated $400 headset and 5 minutes of setup. That's probably the most shocking realization to me - the incredibly low cost and ease of this experience now. Even two years ago an experience with this scale and fidelity would have cost hundreds of thousands of dollars and weeks of setup to achieve. The speed of progress that VR has undergone in the last 7 years is amazing, and I feel incredibly lucky to have been a participant in and first-hand witness to the rebirth and progression of VR.